Overview

Over the past year I have been working on a project to migrate a 4-node AG with multi-terabyte databases, to another AG in a Cloud environment. To add to the fun, the downtime had to be kept to an absolute minimum.

The solution for this is a Distributed Availability Group (DAG), which basically (very basically) links two Availability Groups (AGs) via their Listeners.

For small systems, it can also copy the databases from one AG to the other. However, in this case this was not possible, so some additional work was required to prepare the target AG beforehand.

Test Environment

For this demonstration I have two, two-node AGs with their own Domain Controllers (DCs). I covered the basics of creating an AG within Hyper-V in this article. These AGs now also have a Listener, which is covered here.

So, the start looks like this:

Figure 1: The two two-node AGs (in different domains) with Domain Controllers

AG2 is the ‘target’ AG and at this time has no user databases installed.

The other AG has only one database, for the purpose of this demonstration.

Figure 2: Source AG

Terminology

Within a pairing of AGs (via a DAG) there is only one Primary node. That is the Primary node of the AG that is sending the data – effectively the ‘controlling’ AG and within SQL is called the PRIMARY_GLOBAL.

This Primary node sends data to the replicas on the same AG (as normal) but also to the paired node on the ‘receiving’ AG. This node (on the ‘receiving AG’) is called the ‘Forwarder’. It then replicates the data to the other nodes on the ‘receiving’ AG.

First Steps – the Certificate

The Primary Node

To enable the two AGs to communicate, across the DAG, each node that can be either a Primary or Forwarder will require a certificate.

The minimum configuration will be a certificate on the Primary node and the Forwarder node. However, if you need to cater for a failover within either of the AGs (as opposed to a DAG failover, which is different) than any other nodes that can potentially be the Primary or Forwarder need the certificate installing too.

The certificate will require a login and user to be associated with it and then it will be associated with the mirroring endpoint.

Before creating the certificate, the SQL Server instance requires a database master key, so create that if you don’t already have one.

Listing 1: Create Database Master Key

USE master;

GO

CREATE MASTER KEY ENCRYPTION BY PASSWORD = '<password>';

GO

Then create the certificate and create a backup of that certificate.

In my case I backup the certificate to the C:\Temp folder and use the same folder on the other nodes.

Listing 2: Create and Backup Certificate

USE master;

GO

/*Create a certificate for the DAG*/

CREATE CERTIFICATE dag_certificate

WITH SUBJECT = 'DAG Cert';

GO

/*Backup the certificate*/

BACKUP CERTIFICATE dag_certificate

TO FILE = 'C:\Temp\dag_certificate.cer'

WITH PRIVATE KEY (

FILE = 'C:\Temp\dag_certificate.pvk',

ENCRYPTION BY PASSWORD = '<password>'

);

GO

Create the login and user that will be associated with the certificate.

Listing 3: Create and Certificate’s Login and User

USE master;

GO

CREATE LOGIN dag_login WITH PASSWORD = '<password>';

CREATE USER dag_user FOR LOGIN dag_login;

Having created the certificate and user, it now needs to be associated with the mirroring endpoint.

When you replace the authentication mode, replication within the AG will have issues until all replica nodes have the same authentication mode as the Primary node. One way to prevent this is to have multiple authentication modes specified.

The default authentication mode for the mirroring endpoint is ‘WINDOWS NEGOTIATE’. It is possible to have several modes listed, and if one fails SQL will try the next on the list. Therefore, to avoid replication errors within the AG, update the mirroring endpoint to use the certificate and the existing mode.

Listing 4: Alter the Mirroring Endpoint to use the Certificate

/*Alter the endpoint to use the certificate.

Using two authentication methods ensures replication continues while

the endpoints are changed. Sequence is important - certificate first and then

(if it fails) WINDOWS NEGOTIATE (as current).*/

ALTER ENDPOINT [Hadr_endpoint]

FOR DATA_MIRRORING(

AUTHENTICATION = CERTIFICATE [dag_certificate] WINDOWS NEGOTIATE);

Then grant connection permissions to the login that we associated with the certificate.

Listing 5: Grant CONNECT to the Login for the mirroring endpoint

/*Grant CONNECT permission to the new user, for this endpoint*/

GRANT CONNECT ON ENDPOINT::[Hadr_Endpoint] to [dag_login];

GO

Generally, that is all that is required for the endpoint, but on occasion it might need to be restarted. It does no harm to restart it anyway.

Listing 6: Restart the endpoint

/*A restart of the endpoint might not be required, but does no harm*/

ALTER ENDPOINT [Hadr_Endpoint] STATE = STARTED;

GO

All Other Nodes

The certificate now needs to be restored to all other nodes that could become either Primary nodes or Forwarders.

The execution is the same for all of these – restore the certificate and then create the login and user, and then update the mirroring endpoint. The only difference from the installation on the Primary node is that the certificate is restored, instead of created.

Listing 7: The Certificate Setup on the Other Nodes

/*We need a Master Key on the Instance*/

USE master;

GO

CREATE MASTER KEY ENCRYPTION BY PASSWORD = '<password>';

GO

/*Create login and user that will be associated with the certificate*/

USE master;

GO

CREATE LOGIN dag_login WITH PASSWORD = '<password>';

CREATE USER dag_user FOR LOGIN dag_login;

/*Restore the certificate*/

CREATE CERTIFICATE dag_certificate

AUTHORIZATION dag_user

FROM FILE = 'C:\Temp\dag_certificate.cer'

WITH PRIVATE KEY (

FILE = 'C:\Temp\dag_certificate.pvk',

DECRYPTION BY PASSWORD = '<password>'

);

GO

/*Alter the endpoint to use the certificate*/

ALTER ENDPOINT [Hadr_endpoint]

FOR DATA_MIRRORING(

AUTHENTICATION = CERTIFICATE [dag_certificate] WINDOWS NEGOTIATE);

/*Grant CONNECT permission to the new user, for this endpoint*/

GRANT CONNECT ON ENDPOINT::[Hadr_Endpoint] to [dag_login];

GO

/*A restart of the endpoint might not be required, but does no harm*/

ALTER ENDPOINT [Hadr_Endpoint] STATE = STARTED;

GO

Seeding – Automatic or Manual?

When you create a database on and AG, if you have automatic seeding configured, SQL will copy the database to all nodes on the AG and attach them. If you have manual seeding set, then you must restore from backups taken from the Primary node and attach the database yourself. The DAG has the same abilities.

In this demonstration I am showing the manual configuration. My experience involved an AG with very large databases, and it was simply not an option to use automatic seeding – the copy of the data across the distances involved would simply have been too long and the overhead to the network too large.

Preparing the Database

Because (in this article) we are using manual seeding throughout, it is necessary to prepare the ‘target’ AG ourselves. This is done by taking a Full backup from the ‘source’ AG and restoring it on all of the ‘target’ AG nodes, with NORECOVERY – even on the target’s Primary node (which will become the Forwarder, once the DAG has been created). Because the DAG will be transferring data from the Primary node to the Forwarder, the database must be waiting for further data – just as if it were on a replica node of an AG, which effectively, it is. At this point the databases on the ‘target’ servers are not part of an AG.

Having restored the full backup, we then bring it as up to date as we can, by restoring the necessary log backups from the source AG, again with NORECOVERY.

Notice that in the screenshot below, there are no databases attached to the AG. You cannot attach an AG that has databases already contained, to a DAG that is to be configured as the Forwarder.

Figure 3: Restored Databases on Target Servers

Creating the Distributed AG

Now that the databases are ready, the DAG can be created.

The DAG connects the Listeners via the mirroring endpoint port, so you need the Listener name and the port number on the mirroring endpoint.

The Listener name is easy to locate within SSMS and the port can be obtained by simply scripting out the Database Mirroring endpoint.

Figure 4: Finding the Listener Details for the DAG

On the source AG, we need to create the DAG and the join the target AG to it.

Listing 8: Creating the DAG (on the source AG Primary Node)

USE master;

GO

CREATE AVAILABILITY GROUP DistAG

WITH (DISTRIBUTED)

AVAILABILITY GROUP ON

N'SourceAG' WITH(

LISTENER_URL = N'tcp://SourceAGLsnr:5022',

AVAILABILITY_MODE = ASYNCHRONOUS_COMMIT,

FAILOVER_MODE = MANUAL,

SEEDING_MODE = MANUAL

),

N'Azure' WITH (

LISTENER_URL = N'tcp://AzureLsnr:5022',

AVAILABILITY_MODE = ASYNCHRONOUS_COMMIT,

FAILOVER_MODE = MANUAL,

SEEDING_MODE = MANUAL

);

GO

Listing 9: Adding the Target AG to the DAG (on the Target AG Primary Node)

USE master;

GO

ALTER AVAILABILITY GROUP DistAG

JOIN AVAILABILITY GROUP ON

N'SourceAG' WITH(

LISTENER_URL = N'tcp://SourceAGLsnr:5022',

AVAILABILITY_MODE = ASYNCHRONOUS_COMMIT,

FAILOVER_MODE = MANUAL,

SEEDING_MODE = MANUAL

),

N'Azure' WITH (

LISTENER_URL = N'tcp://AzureLsnr:5022',

AVAILABILITY_MODE = ASYNCHRONOUS_COMMIT,

FAILOVER_MODE = MANUAL,

SEEDING_MODE = MANUAL

);

GO

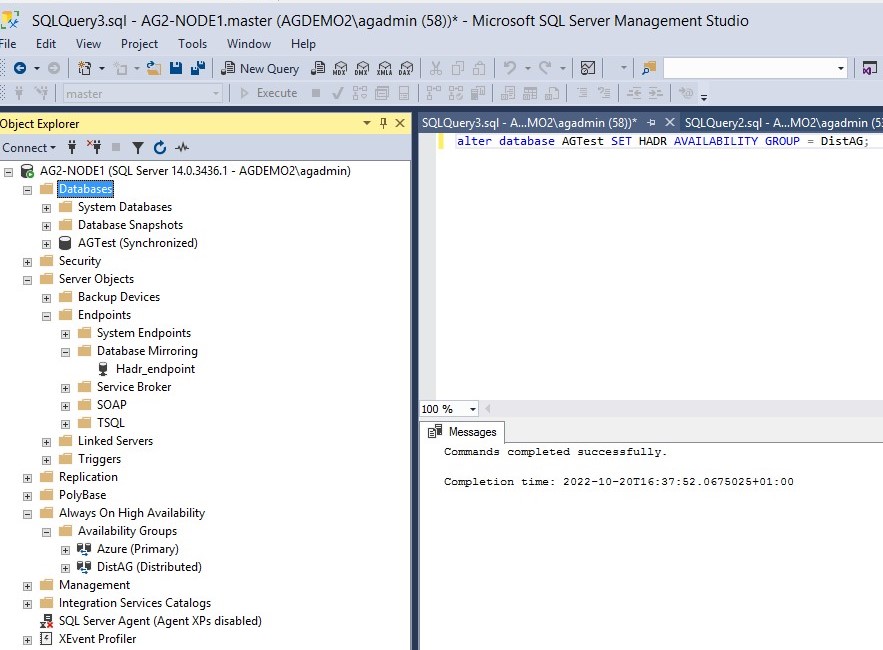

After executing the two commands detailed above, you should now have a DAG listed under ‘Availability Groups’ in SSMS.

Note that if you expand the DAG details, all that SSMS can show you is the AGs that are connected. There are no details of databases or Listeners. Similarly, you cannot use the ‘Show Dashboard’ feature – it is either greyed out or will show an error.

Figure 5: The DAG Displayed in SSMS

Attaching the Databases

Now that the DAG has been created, the databases that were restored on the target AG (named ‘Azure’ in this article) need to be attached.

On the Forwarder there are two options and the end result is the same. The database can be attached to the ‘DistAG’ AG (in other words – the DAG), or the AG that resides on that instance.

Either way, it will become part of the Forwarder’s AG and therefore part of the DAG.

Having attached it on the Forwarder, you will need to repeat that action on each node of the AG.

Figure 6: The Database attached to the AG and DAG

/

The AG Dashboard will now show a healthy AG, although it is being ‘supplied’ with updates via the DAG.

Figure 7: AG Dashboard of Target AG (‘Azure’)

DAG Failover

Another advantage of using a DAG is that you can switch the AGs over and keep them in sync. It is therefore possible to move the processing to the new AG and have it all replicated to the old AG and if things don’t appear to work as expected, you can revert to the original AG.

This gives the advantage of a rollback process – always a good thing with a complicated change.

There are two steps to the failover – setting the current Primary server to Secondary and then issuing the command to failover.

To failover with a DAG, the current ‘Source’ AG needs to be manually set to SECONDARY and then a manual failover is executed (from the AG that will become the Primary AG), the option to allow data loss is required, although of course this doesn’t mean that there will be data loss.

Listing 10: DAG Failover

USE master;

GO

ALTER AVAILABILITY GROUP [DistAG] SET (ROLE = SECONDARY);

GO

--Then, switch to the Azure PRIMARY

USE master;

GO

ALTER AVAILABILITY GROUP [DistAG] FORCE_FAILOVER_ALLOW_DATA_LOSS;

GO

Although the failover is quite quick, it might take a short amount of time for the queries to report that everything is fine. I believe that the DMVs aren’t updated instantly, so their information might lag behind the true picture for a short while.

Listing 11: Queries for the DAG status

/*Show connected status and any errors from the Primary*/

SELECT r.replica_server_name,

r.endpoint_url,

rs.connected_state_desc,

rs.last_connect_error_description,

rs.last_connect_error_number,

rs.last_connect_error_timestamp

FROM sys.dm_hadr_availability_replica_states rs

INNER JOIN sys.availability_replicas r

ON rs.replica_id = r.replica_id;

-- Shows active AGs - will show DAG from Primary node too

SELECT

ag.[name] AS [AG Name],

ag.Is_Distributed,

ar.replica_server_name AS [Replica Name]

FROM sys.availability_groups AS ag

INNER JOIN sys.availability_replicas AS ar

ON ag.group_id = ar.group_id

GO

-- Shows AG details, from Priamry of source AG

SELECT

ag.[name] AS [Distributed AG Name],

ar.replica_server_name AS [Underlying AG],

dbs.[name] AS [Database],

ars.role_desc AS [Role],

drs.synchronization_health_desc AS [Sync Status],

drs.log_send_queue_size,

drs.log_send_rate,

drs.redo_queue_size,

drs.redo_rate

FROM sys.databases AS dbs

INNER JOIN sys.dm_hadr_database_replica_states AS drs

ON dbs.database_id = drs.database_id

INNER JOIN sys.availability_groups AS ag

ON drs.group_id = ag.group_id

INNER JOIN sys.dm_hadr_availability_replica_states AS ars

ON ars.replica_id = drs.replica_id

INNER JOIN sys.availability_replicas AS ar

ON ar.replica_id = ars.replica_id

WHERE ag.is_distributed = 1

GO

---More in-depth details, results appropriate to the node it runs on

SELECT

ag.name AS 'AG Name',

ag.is_distributed,

ar.replica_server_name AS 'AG',

dbs.name AS 'Database',

ars.role_desc,

drs.synchronization_health_desc,

drs.log_send_queue_size,

drs.log_send_rate,

drs.redo_queue_size,

drs.redo_rate,

drs.suspend_reason_desc,

drs.last_sent_time,

drs.last_received_time,

drs.last_hardened_time,

drs.last_redone_time,

drs.last_commit_time,

drs.secondary_lag_seconds

FROM sys.databases dbs

INNER JOIN sys.dm_hadr_database_replica_states drs

ON dbs.database_id = drs.database_id

INNER JOIN sys.availability_groups ag

ON drs.group_id = ag.group_id

INNER JOIN sys.dm_hadr_availability_replica_states ars

ON ars.replica_id = drs.replica_id

INNER JOIN sys.availability_replicas ar

ON ar.replica_id = ars.replica_id

--WHERE ag.is_distributed = 1

GO

---Endpoint and failover etc details

SELECT

ag.name AS group_name,

ag.is_distributed,

ar.replica_server_name AS replica_name,

ar.endpoint_url,

ar.availability_mode_desc,

ar.failover_mode_desc,

ar.primary_role_allow_connections_desc AS allow_connections_primary,

ar.secondary_role_allow_connections_desc AS allow_connections_secondary,

ar.seeding_mode_desc AS seeding_mode

FROM sys.availability_replicas AS ar

JOIN sys.availability_groups AS ag

ON ar.group_id = ag.group_id

GO

Having completed the DAG failover, the ‘Azure’ AG now has the Primary node and the ‘SourceAG’ AG now has the Forwarder. The entire AG has effectively moved from one set of servers to another, while still being replicated across to the ‘old’ location.

Once you are happy that the new location is suitable, you can remove the DAG by issuing the ‘DROP AVAILABILITY GROUP’ command against the DAG.

At this point the two AGs will no longer be connected and will then be independent AGs, no longer replicating between each other. You can then drop the old AG, as it should no longer be required.

Conclusion

The Distributed Availability Group is an effective way to transfer an AG with the minimum amount of disruption. It is very easy to use when the two AGs are in the same domain but requires a little more work when they are in different domains.

There can be some things to watch out for that can impact the performance of the DAG and these will be covered in a future article.

References

What is a Distributed Availability Group? (Microsoft Learn)

Configure a Distributed Availability Group (Microsoft Learn)

Msg 927, Level 14, State 3, Line 26

Database ‘xxxx’ cannot be opened. It is in the middle of a restore.

I am confused why I am getting this error when attempting to run

ALTER DATABASE [xxxx] SET HADR AVAILABILITY GROUP = [dAG];

or

ALTER DATABASE [xxxx] SET HADR AVAILABILITY GROUP = [secondary_AG];